A history of computing

Computing used to happen exclusively in "computer rooms". Now computers sit on desks or in our laps, in our pockets, on our wrists, in our cameras, door locks, coffee makers, and even yoga mats. Other computers labor silently in 100-acre server farms that may contain more than a hundred thousand servers, all of which have Internet access. How did we get here?

In 1968 a “mini” computer such as the DEC PDP-12 (1st photo) was the size of a refrigerator and had a maximum of 32K 12-bit words of memory.

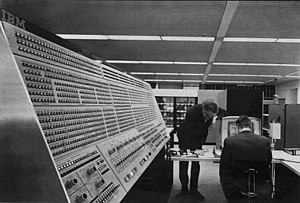

A “mainframe” computer (2nd photo) filled a large room. Computers required specialists to operate and were typically used for tasks like business accounting, research data analysis, and cryptography. The notion of a “personal” computer was nonsensical. Today many people have a smartphone in their pocket or purse that can outperform an old-fashioned mainframe.

These transformations have been made possible by the confluence of the ever smaller, faster, and more powerful chips produced in billion-dollar digital chip fabs, and the development and continued expansion of the Internet, the Web, and more recently the “Cloud”.

The first easily accessible personal computer, the Apple-II, came out in 1977. It was about the size of a briefcase and had between 4K and 48K bytes of memory. The basic one cost $1300. People who otherwise could never expect to see, let alone program and operate, a computer could own an Apple-II and experiment with it to their heart's content. The IBM PC, aimed at business users, debuted four years later, in 1981. These first PCs had woefully little software and could communicate with other computers only by exchanging floppy disks or by slow dial-up phone connections.
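For a sense of scale, the memory figures quoted for these early machines can be put into the same units. The short sketch below is only illustrative arithmetic; it assumes that "K" means 1024 and that a byte is 8 bits, and the function and variable names are made up for this example.

```python
def words_to_bytes(words, bits_per_word):
    """Convert a memory size given in fixed-width words to 8-bit bytes."""
    return words * bits_per_word // 8

K = 1024  # assumption: "K" is the binary kilo (1024)

# DEC PDP-12: a maximum of 32K words, each 12 bits wide
pdp12_max_bytes = words_to_bytes(32 * K, 12)

# Apple-II: between 4K and 48K bytes
apple_ii_min_bytes = 4 * K
apple_ii_max_bytes = 48 * K

print(pdp12_max_bytes, apple_ii_min_bytes, apple_ii_max_bytes)
```

Under these assumptions, a fully expanded refrigerator-sized minicomputer and a fully expanded briefcase-sized Apple-II held the same amount of memory: 48K bytes.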

Computers in business and research facilities, however, needed to communicate rapidly with each other. So the US Government funded the development of ARPANET, a precursor of the Internet, in April 1969. That network expanded to 213 host computers by 1981, with another host connecting approximately every twenty days. But individual personal computers were unaffected by most such changes and the growth of PCs remained relatively sedate. Until... the world's first website went live to the public in 1991. By 1995 there were an estimated 23,500 websites, and by June of 2000 there were about 10 million. Today there are over 1.3 billion.

Visionaries at Apple further accelerated growth by delivering the iPod and iTunes in 2001. Other visionaries released Skype for video communication in 2003. Computing wasn't just for boring desktop applications anymore! Small computers could live in our pockets and people could communicate by video with other people anywhere in the world over the Internet. In July, 2004, Newsweek put Steve Jobs on the cover and declared “America the iPod Nation”.

Facebook was first to demonstrate the potential of digital “friends” in 2004. Twitter sent the first tweet March 21, 2006. Mobile computing took off with the iPhone in 2007; Google announced its me-too Android OS the same year, and the first Android smartphones appeared in 2008. Each year thereafter many new devices came to market, new apps became popular, and new social media sites came online. The digital ecosystem was permanently transforming our societies and economies as computing continued to evolve on several interdependent fronts:

- Physical computers depend upon the techniques and economics of the giant chip "fabs" that make CPU chips, memory chips, and I/O devices.

- Operating Systems (OSs) depend upon the architecture of a CPU, its instruction set and the way it connects to memory and I/O devices.

- The physical infrastructure of the Internet began with copper cables, then evolved to use optical fiber cables, various Wi-Fi and longer-range wireless connections, long-range microwave connections, and satellite connections.

- The logical architecture of the Internet was defined by the Domain Name System (DNS) and the Internet Protocols: IPv4 and, more recently, IPv6.

- The techniques for architecting, designing, and coding software systems needed to grow as well. They include language, compiler, and interpreter design, and other software tools used to code the Operating Systems that operate the computer and the applications that run on the computer.

- The variety and usability of the many services made available in “The Cloud” had to grow as well – among them Google search, online retail (e.g., Amazon) and co-located computing for rent. Ways of finding desired services were also needed.

- Server farms, e.g., Google's, Facebook's, and Digital Realty Trust's, gather and analyze the masses of data left hither and yon by end users as they browse or otherwise interact with sites on the web.

The hardware and software issues in the above list are in turn affected by the sociology of the use of computers and networks, i.e., why and how one connects with other computers and how easy and cheap it is to do so, what one does with a computer and why, what's “cool”, what's useful, what the financial, legal, and privacy implications are, and so forth. These issues continue to evolve rapidly and in turn tend to influence new hardware. For example, an entire subculture uses computers to play compute-intensive games. Their tastes have driven development of very fast CPU chips and Graphics Processing Units (GPUs, e.g., from NVIDIA). Yet another subculture focuses on exchanging gossip via social media. That drives Facebook to build some of the largest server farms on the planet. And the IoT feedback loop, pushed by heavy marketing, relies upon how “cool” it is to install all sorts of IoT devices in your “smart home”.

The economies of scale inherent in large chip fabs fueled both sides of a virtuous circle: more than a billion smartphones are sold each year, and the activity of smartphone users creates vast amounts of data that, if analyzed properly, become valuable information about those users. The potential value of such data creates demand for more than 10 million servers to be bought and installed per year in huge data centers. The economics of digital ecosystems are self-reinforcing.

The sheer scale of the digital ecosystem has fueled two new types of computing: Cloud computing, and Big Data analysis. Every day, we create 2.5 quintillion bytes of data. This data, properly analyzed, can give us insights into how the world works, how people make decisions, how traffic can be better managed, how an individual driver can avoid traffic, how consumer preferences are changing, and how voters are likely to vote. But Big Data analysis takes massive compute power.

Enter The Cloud! Of course large enterprises may have their own server farms for doing Big Data analysis and their own software developers to craft the analytics programs. However, smaller firms and even individuals can rent cloud compute power quite cheaply to use analytics that require many servers. Also, the rapid growth of a program development ecosystem, based on tools such as Docker, makes it quite easy to build and run analytics software on a wide range of servers with minimal cost and effort. Thus the explosion of personal mobile computing generates Big Data and the rapid growth of the size, power, and ease of use of the Cloud is fueling an explosion of Big Data analysis.

The term “cloud” was, in effect, simply a rebranding of the Internet. But it seems to have first been stated publicly by Eric Schmidt of Google, August 9, 2006, at a Search Engine Strategies Conference. He said:

"... there is an emergent new model, and you all are here because you are part of that new model. I don't think people have really understood how big this opportunity really is. It starts with the premise that the data services and architecture should be on servers. We call it cloud computing - they should be in a “cloud” somewhere. And that if you have the right kind of browser or the right kind of access, it doesn't matter whether you have a PC or a Mac or a mobile phone or a BlackBerry or what have you - or new devices still to be developed - you can get access to the cloud. There are a number of companies that have benefited from that. Obviously, Google, Yahoo!, eBay, Amazon come to mind. The computation and the data and so forth are in the servers."

Amazon's EC2/S3 was first to explicitly monetize the notion of data centers as “cloud” computing. IBM had done similar things in customized contracts but Amazon sold virtual servers to any and all comers.

Google's current types of web crawling and analysis would be impossible without the orders-of-magnitude improvements in Internet speeds and in data center size and performance that have occurred in recent years. Cloud computing would be impractical too. And such new devices as virtual reality headsets and their applications simply would not exist without advances in chip density and displays.

Server farms, games, and IoT coolness aside, perhaps the simplest way to summarize the history of computing and its impact on the world is to note that in 2016 global data centers used about 3% of the world's total electricity. Moreover, from the vacuum tube gates of WW-II to the latest 5-nanometer chip features, the power used to execute one machine instruction has decreased at least a million-fold and the speed has increased by a similar ratio. Thus the computing accomplished per unit of electric power has increased a trillion-fold. And digital history is accelerating.
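The trillion-fold figure follows from multiplying the two million-fold ratios. The sketch below spells out that arithmetic under one reading of the paragraph, which is an assumption: "power" is taken as the wattage drawn while executing and "speed" as instructions per second. The baseline values are normalized placeholders, not measurements, and the function name is made up for this example.

```python
from fractions import Fraction

def instructions_per_joule(power_watts, instructions_per_second):
    """Instructions per second divided by watts gives instructions per joule."""
    return Fraction(instructions_per_second) / Fraction(power_watts)

# Normalized, hypothetical baseline: 1 watt and 1 instruction per second.
baseline = instructions_per_joule(power_watts=1, instructions_per_second=1)

# A million-fold less power and a million-fold more speed (ratios from the text).
modern = instructions_per_joule(
    power_watts=Fraction(1, 10**6),
    instructions_per_second=10**6,
)

improvement = modern / baseline
print(improvement)  # ratio of work done per unit of energy
```

With these ratios the improvement works out to 10**12, i.e. a trillion-fold; exact fractions are used so that floating-point rounding does not blur the result.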

What might the future hold?

Last revised 5/11/2019